In reality, other people aren’t thinking about you nearly as much as you intuitively think they are.

The Scout Mindset is by Julia Galef. The subtitle is “Why Some People See Things Clearly and Others Don’t”.

I’ve been interested in better decision making and Rationalism for years, so I read Julia Galef’s book as a supplement to the outlook she and I already share, and not because I expected to learn lots of new things. This is a “comfort zone” book, not a “completely change my way of thinking” book. She writes in a readable style; while similar ideas are shared on sites like Less Wrong, she explains them in ways that are free of technical in-group jargon.

The book’s title comes from the metaphor of scouts and soldiers applied to the subject of thinking, learning, and communicating/debating with other people. A soldier attacks to win new ground and defends their turf. A scout, on the other hand, wants to find out the truth. If they go out scouting and see that the enemy outnumbers their side, or that a bridge they were hoping to cross has been washed away in a flood, well, yes that’s bad news, but they’d rather know it than not know it. A scout does their job by finding and reporting the truth. Accurate knowledge of what’s out there is useful, whether it’s good news or bad. Julia Galef argues we should be like the scout, not the soldier.

Be Confident that the World is Messy

My favorite part of the book was the discussion of “uncertainty”:

When people claim that “admitting uncertainty” makes you look bad, they’re invariably conflating these two very different kinds of uncertainty: uncertainty “in you,” caused by your own ignorance or lack of experience, and uncertainty “in the world,” caused by the fact that reality is messy and unpredictable.

I really like this as effective communication advice. As someone with experience, I can be certain about the fact that the world is uncertain. She uses Benjamin Franklin as an example: “In his autobiography, an elderly Franklin reflects on his life and marvels at how effective his habit of speaking with “modest diffidence” turned out to be…”. Later she phrases it another way: “I don’t need to appear certain—because if I can’t be sure of the answer, no one can be.”

When I was younger, I used to answer “I don’t know” to a lot of questions. And well, yes it was literally true that I didn’t know. But I used that phrase as a way to say nothing else, when friends were just trying to make conversation with me. Over the years, I’ve gotten better at sharing opinions while at the same time being comfortable that there’s a lot I don’t know. Though I made that progress before The Scout Mindset was written, I like this section because it gives me a solid way to think about my progress – I still believe the world is messy, but now I feel comfortable being able to talk about that messiness, since I accept it and my knowledge of it.

(I still say “I don’t know” a lot, but I try to use it to qualify a longer answer, instead of using it as a conversation-ender.)

Change Your Beliefs in Small Steps

I liked the way she used a very down-to-earth example to frame the idea of changing our beliefs bit-by-bit. She calls this “the belief-updating style of a superforecaster”:

You’re probably already comfortable with the idea of changing your mind incrementally in some contexts. When you submit a job application, you might figure you have about a 5 percent chance of ultimately getting an offer. After they call you to set up an in-person job interview, your estimate might rise to about 10 percent. During the interview, if you feel like you’re hitting it out of the park, maybe your confidence in getting an offer rises to 30 percent. If you haven’t heard from them for a couple of weeks after your interview, your confidence might fall back down to 20 percent.

What’s much rarer is for someone to do the same thing with their opinions about politics, morality, or other charged topics…

I like the way she points out that this is not some “unnatural” or “robotic” or “overly scientific” behavior. In fact, we all do this – but we’re not good at doing it for strongly held opinions.
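For the numerically inclined, the job-application example can be read as a chain of small Bayesian updates. The sketch below is my own illustration, not something from the book: it converts each quoted estimate to odds and works out the "evidence factor" (likelihood ratio) that would carry one estimate to the next. The function names and event labels are made up for this example.

```python
def odds(p):
    """Convert a probability into odds in favor."""
    return p / (1 - p)


def implied_bayes_factor(prior, posterior):
    """Likelihood ratio that would move `prior` to `posterior` under Bayes' rule."""
    return odds(posterior) / odds(prior)


# The four estimates quoted in the job-application example, in order.
# The labels are paraphrases, not the book's wording.
steps = [
    ("application submitted", 0.05),
    ("invited to interview", 0.10),
    ("interview goes well", 0.30),
    ("two weeks of silence", 0.20),
]

factors = []
for (_, before), (event, after) in zip(steps, steps[1:]):
    factor = implied_bayes_factor(before, after)
    factors.append(factor)
    print(f"{event}: {before:.0%} -> {after:.0%}, evidence factor ~{factor:.2f}")
```

The factors come out to roughly 2.1, 3.9, and 0.58. None of them is dramatic on its own, which is the point: each piece of news nudges the estimate rather than flipping it.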

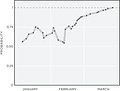

While I like this analogy, I’m not completely sold. In this section, she presents the following graph of how a “superforecaster” updated their belief that a certain event would happen:

But this graph shows an opinion changing over the course of a few months; many of our political beliefs concern issues that we’ve been hearing about for years. Just as the superforecaster gets more certain as time goes on, so do the people who get really mad about politics. The problem is just that they get it wrong. So I’m not sure if “willingness to change your mind in small steps” is what separates superforecasters from culture warriors.

Do you really want to know the answer?

I liked this personal anecdote of how seemingly “innocent” questions can hamper communication:

When I used to teach educational workshops, I made a point of checking in with my students to find out how things were going for them. I knew that if a student was confused or unhappy, it was better to find out sooner rather than later, so I could try to fix the problem. Seeking out feedback has never been easy for me, so I was proud of myself for doing the virtuous thing this time.

At least, I was proud, until I realized something I was doing that had previously escaped my notice. Whenever I asked a student, “So, are you enjoying the workshop?” I would begin nodding, with an encouraging smile on my face, as if to say, The answer is yes, right? Please say yes. Clearly, my desire to protect my self-esteem and happiness was in competition with my desire to learn about problems so that I could fix them.

Small changes in wording can really affect the way a question is perceived. Julia Galef’s story focuses on seeking out feedback – if you ask a question that your discussion partner can tell only has one “acceptable” answer, then you learn nothing when they give you that answer.

It strikes me that there’s another aspect to this, too – people feel offended answering rhetorical questions like this. If you know a question only has one answer, then giving that answer makes you feel a loss of control, and we humans all like to be able to feel a sense of control in our lives.

To put it another way, think of Brene Brown’s vulnerability. If we ask a rhetorical question, we’re not being vulnerable (there’s only one acceptable answer, and so there’s no uncertainty), and we’re demanding our conversation partner not be vulnerable, either. The way Galef frames her story supports this – she was trying to “protect [her] self-esteem”.

Similarly, I liked this suggestion – if you’re asking for advice, frame the story in a way that the person you’re asking won’t know which side you were on:

when you ask your friend to weigh in on a fight you had with your partner, do you describe the disagreement without revealing which side you were on?

There are a few similar stories in pop culture about weeding out the yes-men (like asking your Republican uncle how mad he is about this thing Obama did, then when he agrees, you tell him that it’s actually something Trump did). In this book Galef uses Obama as an example, too: “If someone expressed agreement with a view of his, Obama would pretend he had changed his mind and no longer held that view. Then he would ask them to explain to him why they believed it to be true.” But I don’t think I’d ever heard this specific version; I’ll have to remember to try it out sometime.

How do you know if you’re the smart one or the dumb one?

Galef suggests asking yourself the following question:

if you never notice yourself [being in the ‘Soldier’ mindset], what’s more likely—that you happen to be wired differently from the rest of humanity or that you’re simply not as self-aware as you could be?

I think this is interesting and difficult: if you never notice yourself taking the ‘soldier’ mindset, there are two reasons why that could happen: (a) you’re really bad at noticing your thinking patterns, or (b) you’re always in the scout mindset. If you never notice at all, you have no way to tell the difference between (a) and (b). But if you do notice, at least every once in a while, then you at least have a little bit of information: you know you’re doing better than you could have.

Perhaps it can also be phrased: are you using Scout Mindset consciously or unconsciously? If you’re using it unconsciously, it’s great that you’ve trained yourself to be a Scout, but there’s also a danger that you’re really just Soldiering. If you use Scout Mindset consciously, it takes an extra moment or two but you have the certainty of which Mindset you’re using.

Actually, this last paragraph has a close analogy in meditation: really good meditators never notice their thoughts straying because their thoughts never stray. Really poor meditators never notice their thoughts straying because their thoughts stray so seamlessly. For intermediate meditators, noticing that your thoughts have strayed is actually a good sign: while it’s frustrating that your thoughts have strayed again, it’s better than the alternative of your thoughts straying without you ever noticing.

We see the same problem in politics:

If knowledge and intelligence protect you from motivated reasoning, then we would expect to find that the more people know about science, the more they agree with each other about scientific questions… At the lowest levels of scientific intelligence, there’s no polarization at all—roughly 33 percent of both liberals and conservatives believe in human-caused global warming. But as scientific intelligence increases, liberal and conservative opinions diverge. By the time you get to the highest percentile of scientific intelligence, liberal belief in human-caused global warming has risen to nearly 100 percent, while conservative belief in it has fallen to 20 percent…

From the way I’m talking about polarization, some readers might infer that I think the truth always lies in the center. I don’t; that would be false balance. On any particular issue, the truth may lie close to the far left or the far right or anywhere else.

Just like really poor and really great meditators never notice their minds straying, many people feel very firmly about their political opinions – but some of them must be wrong. Are my political opinions like the novice meditator or the advanced meditator? That’s a question that I still don’t have an answer to.

The Signs of A Scout

Chapter 4 is called “Signs of A Scout”. Here’s the CliffsNotes version, which I wanted to write down so I have it for future reference:

1. Do you tell other people when you realize they were right?
2. How do you react to personal criticism?
3. Do you ever prove yourself wrong?
4. Do you take precautions to avoid fooling yourself?
5. Do you have any good critics?

[I’m not sure I’m important enough to have any critics!]

One of the best anecdotes in the book highlights point #3, “Do you ever prove yourself wrong?”: Bethany Brookshire, a science writer, posted a tweet after being annoyed by a pattern she noticed one morning:

Monday morning observation:

I have "PhD" in my email signature. I sign my emails with just my name, no "Dr." I email a lot of PhDs.

Their replies:

Men: "Dear Bethany." "Hi Ms. Brookshire."

Women: "Hi Dr. Brookshire."

It's not 100%, but it's a VERY clear division.

But then she went back and analyzed all the similar email exchanges she’d had, and found out (quoting from her blog):

Overwhelmingly, people reply with my first name. “Hi Bethany” makes up 78 percent of all replies (209 people) and is split evenly 50/50 between men and women…

2.6 percent of replies (only 7 people) used “Hi Ms. Brookshire.” [about half men, half women]…

Then we get to “Hi Dr. Brookshire.” This salutation was used by 7 percent of scientists (a total of 19). And more of those people were men than women. 63 percent of people who called me “Dr.” were men, and 37 percent were women.
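As a quick sanity check on those figures, here is a small sketch (my own, not from the book or the blog post) that back-calculates what the quoted counts and percentages imply. The total number of replies and the men/women split among the “Dr.” replies are not stated in the quote; they are inferred here from rounded percentages, so treat them as approximations.

```python
# (count, stated percent of all replies), as quoted from the blog post.
salutations = {
    "Hi Bethany": (209, 78.0),
    "Hi Ms. Brookshire": (7, 2.6),
    "Hi Dr. Brookshire": (19, 7.0),
}

# Each count/percent pair implies a total number of replies.
# The percentages are rounded, so the implied totals differ slightly.
implied_totals = {
    name: count / (pct / 100) for name, (count, pct) in salutations.items()
}

# Split the 19 "Dr." replies using the quoted 63% / 37% shares.
# These head counts are an inference, not numbers given in the quote.
dr_total = 19
dr_men = round(dr_total * 0.63)
dr_women = dr_total - dr_men

for name, total in implied_totals.items():
    print(f"{name}: implies about {total:.0f} replies in total")
print(f"'Dr.' replies: about {dr_men} men and {dr_women} women")
```

All three salutations point to a total of around 270 replies, and the “Dr.” group works out to about 12 men and 7 women. With only 19 people in that group, the 63/37 split rests on a handful of emails either way.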

I’m going to start my own list of Mistakes! (see here, here, here for other people’s examples)